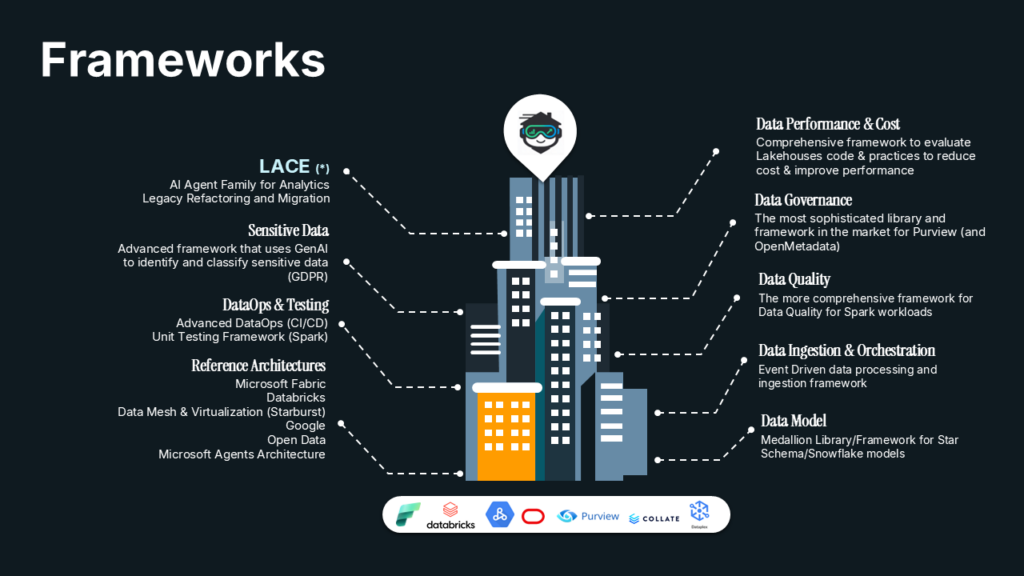

LACE is an AI-powered modernization framework built to help organisations understand, de-risk, and accelerate the transformation of legacy data platforms. It combines specialised copilots and agents to analyse legacy code, extract business rules, and generate the foundations for modern target architectures.

Modernising legacy estates is rarely just a migration challenge. It is a knowledge challenge. Business logic is deeply embedded in ageing systems, documentation is incomplete, and dependencies are often invisible. LACE addresses that complexity directly by turning legacy assets into structured, actionable intelligence.

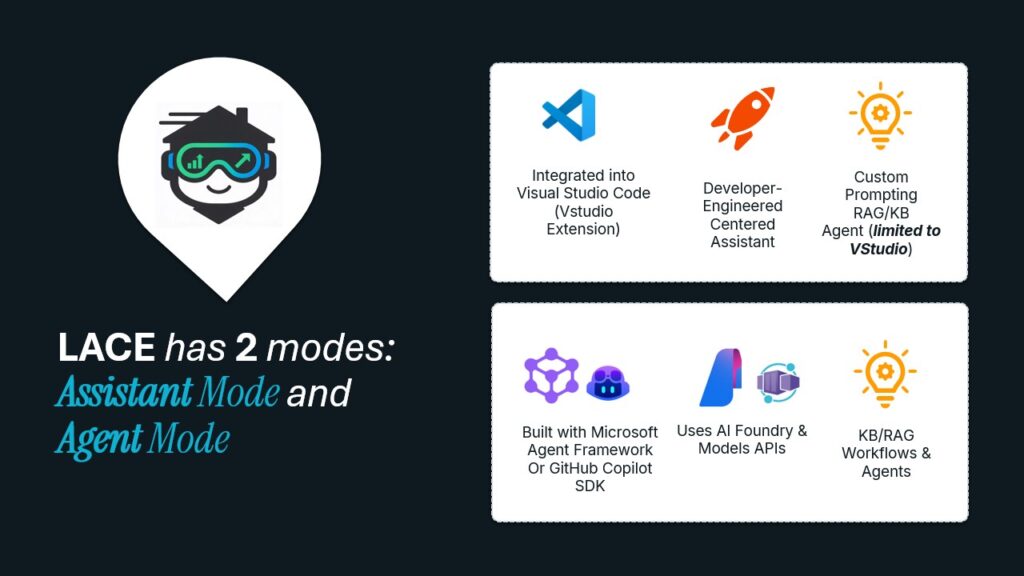

With support for Assistant Mode and Agent Mode, LACE helps teams move from code understanding to transformation design and execution with greater speed, consistency, and engineering confidence.

Understand legacy

Assistant mode

A developer-centred experience for understanding, analysing, and preparing legacy transformation.

Agent mode

A structured path from analysis to generated modernization outputs.

Platform and scope

Designed for modern engineering teams and adaptable to different legacy estates.

On February 26th and 27th, the CMVM (Comissão do Mercado de Valores Mobiliários) hosted an international workshop titled “SupTech: Empowering Supervision,” gathering financial supervisory authorities and leading technology providers. The event, part of CMVM’s SupTech Strategic Plan funded by the European Union, aimed to strengthen the institution’s data collection, processing, and analytical capabilities in the supervisory sphere.

During the workshop, national authorities presented relevant use cases and shared experiences, discussing the latest trends and developments in SupTech. Topics such as document analysis, web scraping, supervision information systems, and data platform management were explored, providing insights into the comprehensive view of supervised entities and technical implementation.

On the second day, bilateral meetings were held to continue the discussions from the first day, while technology companies showcased their latest solutions.

Among the participating technology providers was Link Consulting, which drew on its expertise in the SupTech Strategic Plan. In its presentation, Link Consulting highlighted AI and GenAI use cases focusing on Documentation, Risk, Information Extraction, and Classification. It also emphasized the critical success factors in AI and GenAI adoption, addressing both technical and organizational aspects.

CMVM expressed gratitude for the valuable contributions of all participants, including Link Consulting, and acknowledged the European Union’s and other partners’ support in organizing the event.

February, 2024

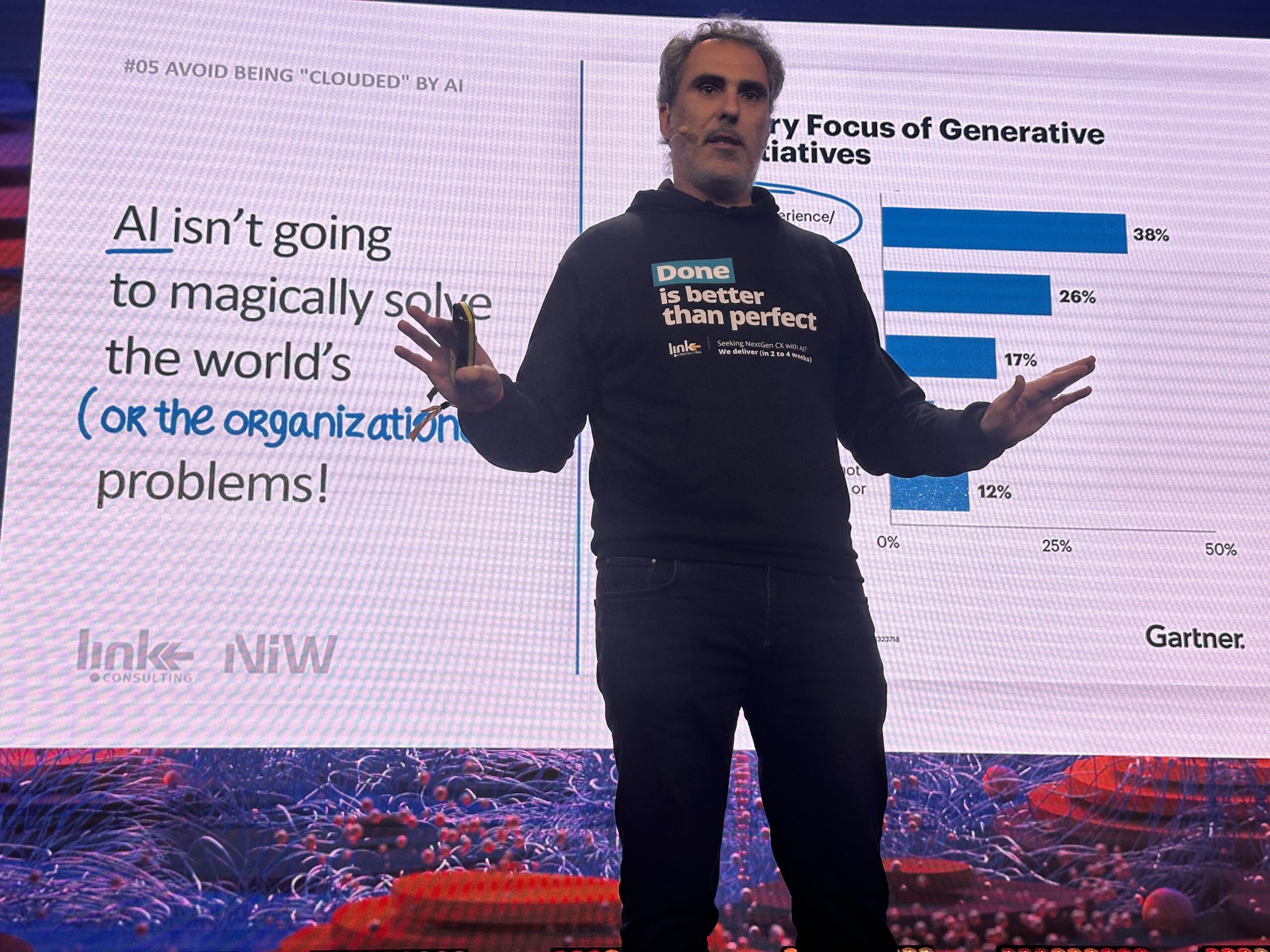

During the Building the Future session, remarkable advancements in artificial intelligence (#AI) were showcased, with inspirational and practical examples of how AI will change our lives. Link Consulting has been at the forefront of developing business solutions that help our clients position themselves as innovation pioneers by leveraging AI.

A standout collaboration between Link Consulting and Grupo Salvador Caetano, specifically in #GenAI, allowed us to craft highly innovative business solutions. These are designed to be production-ready and implemented within a 2- to 4-week time frame, ensuring a lightning-fast time to market while guaranteeing a set of business KPIs in a highly efficient and cost-effective way.

Security, privacy, and confidentiality are top priorities in these solutions developed by Link Consulting, providing clients with peace of mind and compliance with regulations.

📞 If you want to learn more about these innovations and how they can drive your business forward, contact us! We’re ready to share our expertise and help you navigate the exciting world of artificial intelligence. Together, we can create a brighter and more innovative business future.

November 2023 – Starburst, the data lake analytics platform, and Link Consulting, a leading information technology consultancy, are pleased to announce a strategic partnership to accelerate innovation and technological excellence in data lake analytics. This collaboration aims to provide businesses with the tools and capabilities needed to drive data analytics initiatives, regardless of their stage in the cloud journey.

Link, a leading provider of innovative data management solutions, is excited to announce a strategic partnership with Starburst, the Enterprise version of open source Trino, the world’s fastest distributed SQL query engine for Data Mesh and Virtualization.

This collaboration marks a significant milestone in the data management landscape, combining Link’s expertise in comprehensive Data & AI solutions with Starburst’s cutting-edge technology to empower organizations with unparalleled insights through fast access to distributed data.

The partnership aims to address the growing challenges faced by businesses in managing and analyzing vast amounts of data and their heterogeneity.

By combining Link’s expertise in Data & AI (Redglue) with Starburst’s technology, the partnership will deliver a seamless and powerful solution that accelerates cloud adoption and data democratization, especially for large and complex organizations.

Both Link and Starburst express their enthusiasm about this collaborative venture and its potential impact on the Data Mesh & Virtualization landscape.

According to Luis Marques, head of Data & AI at Link: “Link is thrilled to join forces with Starburst to set new standards in Data Mesh & Virtualization by combining our expertise in comprehensive Data & AI with the unparalleled speed and efficiency of Starburst’s technology”.

About Starburst

For data-driven companies, Starburst offers a full-featured data lake analytics platform, built on open source Trino. Our platform includes the capabilities needed to discover, organize, and consume data without the need for time-consuming and costly migrations. We believe the lake should be the center of gravity, but support accessing data outside the lake when needed. With Starburst, teams can access more complete data, lower the cost of infrastructure, use the tools best suited to their specific needs, and avoid vendor lock-in. Trusted by companies like Comcast, Grubhub, and Priceline, Starburst helps companies make better decisions faster on all their data.

“We are delighted to welcome Link Red Glue as our very first Consulting Partner in Portugal. Link Red Glue’s domain and technical expertise will support the demand for Starburst in Portugal, ultimately helping more customers to make better decisions with fast access to all their data and accelerate their time to value,” said Toni Adams, Senior Vice President, Alliances.

About Link:

Link is a leading information technology consulting company based in Portugal. With a strong focus on innovation, Link provides strategic advice and cutting-edge technology solutions to help organizations navigate digital transformation challenges and create long-term value in meaningful partnerships with our customers.

For further information please visit www.linkconsulting.com

This partnership between Starburst and Link Consulting represents an advancement in digital transformation and a commitment to the most innovative and efficient data analytics practices. Both companies are excited to join forces to develop innovative solutions and elevate excellence in analytical techniques.

For more information about this partnership, please visit www.starburst.io and www.linkconsulting.com.

Data security is essential for any organization that handles sensitive information. At its core, data security involves three key elements: confidentiality, integrity, and availability.

Confidentiality ensures that only authorized users can access sensitive data.

Integrity ensures that the data remains accurate and consistent and is stored in reliable structures.

Availability ensures that the data is readily and securely accessible for ongoing business needs.

Data Security Best Practices

Assessing the Risks

Establish Security and Awareness Culture

Understand On-Premises and Cloud Security

Comply with Regulations

Wisely Adopt Technologies

Know Where the Critical Data to Protect Is Located

Data breaches, failed audits, and regulatory violations can all result in reputational damage, fines, and legal consequences. Moreover, protecting sensitive data is critical to ensuring that customer trust is maintained.

Sensitive data includes personally identifiable information, financial information, health information, and intellectual property. As a consulting company, we understand the importance of knowing exactly where your data is located in your IT infrastructure and continuously monitoring data access profiles.

We deliver solutions that not only comply with mandatory regulations like GDPR but also track how your data is accessed, preventing unauthorized access and detecting any changes or corruption. With our comprehensive data security solutions, you can rest assured that your organization’s sensitive information is protected from potential threats.
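As an illustration of what "detecting any changes or corruption" can look like in practice, the sketch below stores a SHA-256 fingerprint alongside a record and later checks the record against it. This is a minimal, generic example and not a description of any specific Link solution; the function names and the sample record are hypothetical.

```python
import hashlib
import hmac


def fingerprint(payload: bytes) -> str:
    """Return a SHA-256 hex digest that acts as the record's fingerprint."""
    return hashlib.sha256(payload).hexdigest()


def is_unchanged(payload: bytes, expected_digest: str) -> bool:
    """Compare the current fingerprint with the stored one.

    hmac.compare_digest performs a constant-time comparison.
    """
    return hmac.compare_digest(fingerprint(payload), expected_digest)


# Hypothetical record and a tampered copy of it.
record = b"customer_id=42;balance=100.00"
stored_digest = fingerprint(record)
tampered = b"customer_id=42;balance=900.00"

record_ok = is_unchanged(record, stored_digest)
tampered_ok = is_unchanged(tampered, stored_digest)
```

A plain hash only detects accidental or unsophisticated changes; if an attacker can also rewrite the stored digest, a keyed construction such as an HMAC or a digital signature would be needed.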

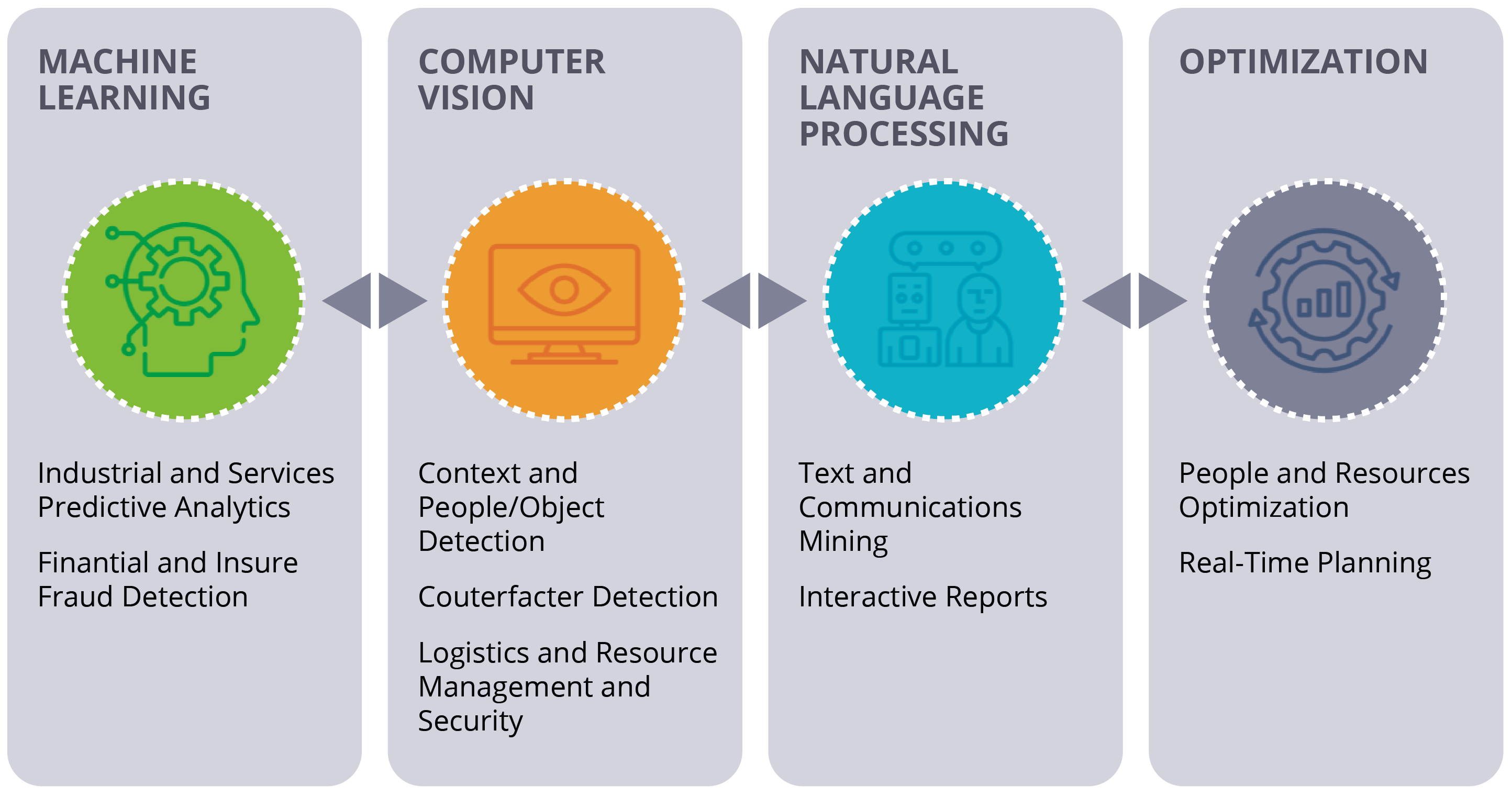

Artificial Intelligence (AI) technologies leverage modern computational resources, hardware, and software to simulate human intelligence processes. Some common AI applications include recommendation systems, natural language processing, speech recognition, gaming engines, and machine vision.

AI can significantly reduce the time and effort required to complete tasks and can operate around the clock without interruptions or breaks. While AI was originally used to replace non-expert workers and processes, it is now increasingly being used to augment the capabilities of expert individuals in organizations.

At our consulting company, we understand that IT projects and solutions require human expertise to leverage the best technology, whether it’s a GPT-3 engine or a more classical Random Forest Machine Learning approach.

We are excited about new trends like Edge AI and Adaptive AI, which enable us to create a more symbiotic world. With Adaptive AI, we can combine methods such as agent-based design with AI techniques like reinforcement learning to enable systems to better adapt to the continuously changing real world.

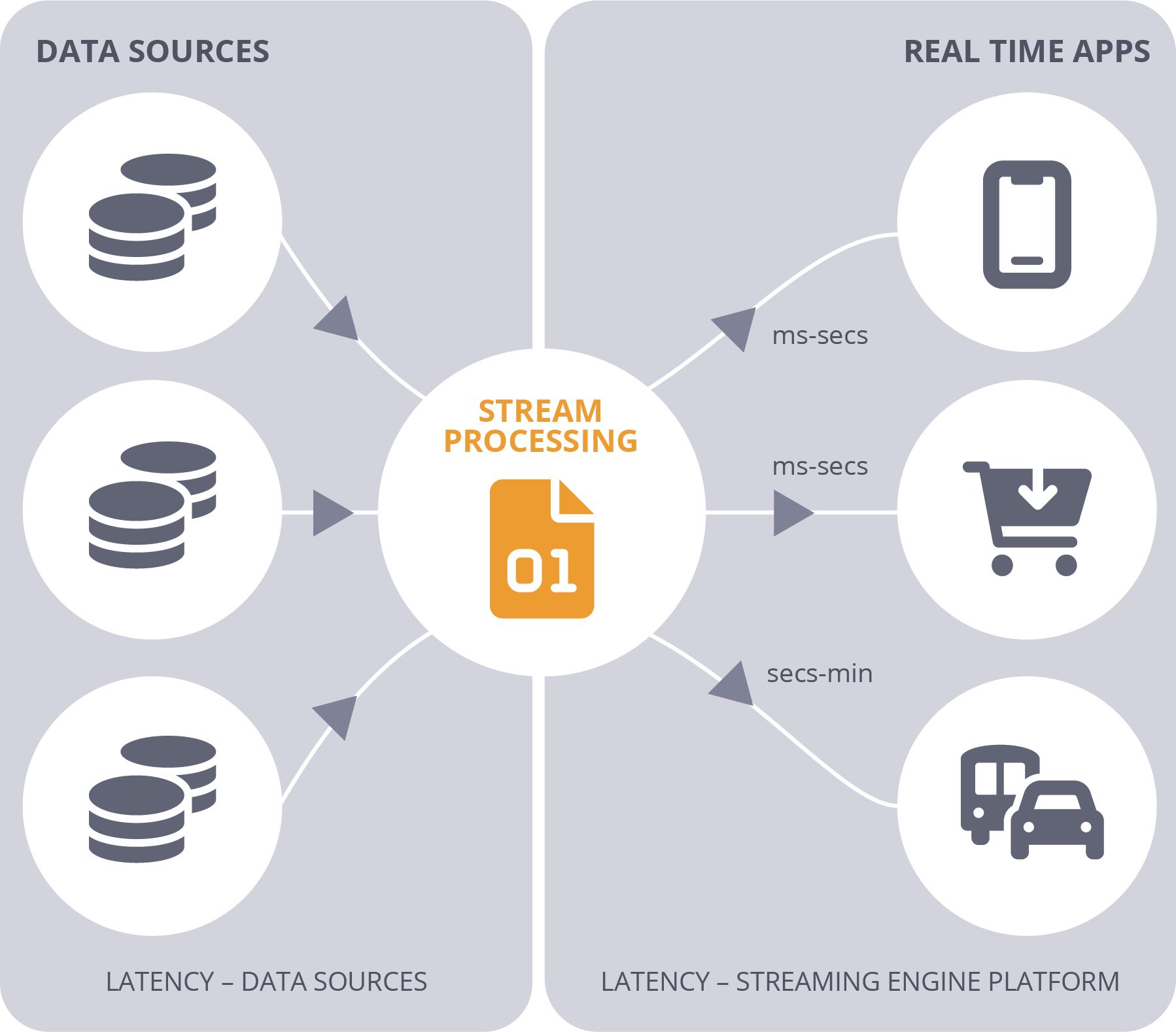

Real-time data has the potential to provide significant value to businesses. However, it also comes with an expiration date. If this data is not utilized within a certain timeframe, its value is lost, and the corresponding decision or action is not taken. Such data is continuously generated and delivered rapidly, which is why it is known as streaming data.

Siloed data systems that collect, store, and process data in batches can slow down data scientists and engineers who require quick access to operationalize data across the organization. Batch processing makes it particularly challenging to reduce latency and deliver powerful computing in real time. This back-and-forth process between systems can leave companies one step behind their customers at every turn.
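The "expiration date" idea can be made concrete with a small sketch. It compares how many events are still actionable when each one is handled shortly after it is created (streaming) versus when everything is handled at the close of a batch window. The event names, time-to-live values, and delays are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    name: str
    created_at: float  # seconds since the start of the example
    ttl: float         # hypothetical window (seconds) in which the event is still useful


def actionable(event: Event, processed_at: float) -> bool:
    """An event keeps its value only if it is processed before its TTL runs out."""
    return processed_at - event.created_at <= event.ttl


events = [
    Event("price_drop", created_at=0.0, ttl=5.0),
    Event("cart_abandoned", created_at=20.0, ttl=45.0),
    Event("fraud_alert", created_at=55.0, ttl=2.0),
]

# Streaming: assume each event is handled about one second after it is created.
STREAM_DELAY = 1.0
streamed = [e.name for e in events if actionable(e, e.created_at + STREAM_DELAY)]

# Batch: assume all events are handled together when the batch closes at t = 60 s.
BATCH_CLOSE = 60.0
batched = [e.name for e in events if actionable(e, BATCH_CLOSE)]
```

Under these assumptions all three events are still actionable in the streaming case, while only the long-lived `cart_abandoned` event survives the wait for the batch to close.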

A graphical look into real time

A graphical look into real-time modes

We understand that advanced analytics is a critical component of a successful business strategy. By utilizing predictive modeling, machine learning algorithms, business process automation, and other statistical methods, our team of experts can help your organization analyze data from a variety of sources to gain valuable insights into your business operations.

Our advanced analytics solutions enable you to not only analyze historical and current data but also to predict patterns and estimate the likelihood of future events. By doing so, you can be more responsive to market changes, identify opportunities for growth, optimize your operations, and ultimately make more informed decisions.
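As a very small example of predictive modeling, the sketch below fits a straight line to a few historical values using ordinary least squares and extrapolates one period ahead. The monthly figures are invented for illustration, and real projects would typically use richer models and dedicated libraries; this only shows the basic idea of learning a pattern from historical data and projecting it forward.

```python
from typing import Sequence, Tuple


def fit_line(xs: Sequence[float], ys: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical monthly sales figures (illustrative data only).
months = [1, 2, 3, 4]
sales = [110.0, 125.0, 138.0, 155.0]

slope, intercept = fit_line(months, sales)

# Project the fitted trend one month beyond the historical data.
forecast_month_5 = slope * 5 + intercept
```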

We believe that advanced analytics is more than just a methodology; it is a way to gain a competitive edge in today’s fast-paced business environment. Our team is proficient in a variety of advanced analytics tools and techniques, including but not limited to predictive modeling, machine learning, and business process automation.

Predictive Analytics

Data Visualization

Interactive visual representations of abstract data to enhance human cognition.

What it is

Data solutions don’t have to be complex or difficult to develop and maintain. Our team of architects and engineers can assist you in designing and implementing a multi-cloud, on-site, or hybrid architecture. This architecture will enable you to ingest and serve your data using the best components for each scenario, whether it’s streaming or batch, structured or semi-structured data, Big Data, document or relational databases.

By leveraging this data architecture, businesses can easily deploy new code and infrastructure while scaling up, out, and down through automated pipelines, ensuring optimal performance while being cost-efficient.

We deliver solutions that provide an effective way to trace, tag, and map your data, resulting in improved data lineage while implementing best practices for security access and roles.

With extensive experience in open-source and cloud services, our team is proficient in Docker and Kubernetes, Apache Spark, Apache Kafka, Confluent, Starburst, Databricks, CosmosDB, Cassandra, MongoDB, MinIO, Singlestore, Azure Data Factory, Airflow, PowerBI, and other related tools.

We can assist you in building a better solution that suits your specific needs.

Data Democratization

In today’s data-driven world, businesses are constantly inundated with data. To fully leverage the potential of this data, organizations must embrace the concept of data democratization. This involves making data easily accessible to all stakeholders in a seamless and understandable manner so that everyone can work with data confidently, feel comfortable discussing it, and make informed decisions.

Data democratization is an ongoing process that ensures data is readily available to all individuals within an organization, regardless of their technical expertise. By empowering stakeholders with access to data, businesses can foster a culture of data-driven decision-making, which can ultimately lead to improved business outcomes.